The generative AI revolution embodied in tools like ChatGPT, Midjourney, and many others is at its core based on a simple formula: Take a very large neural network, train it on a huge dataset scraped from the Web, and then use it to fulfill a broad range of user requests. Large language models (LLMs) can answer questions, write code, and spout poetry, while image-generating systems can create convincing cave paintings or contemporary art.

So why haven’t these amazing AI capabilities translated into the kinds of helpful and broadly useful robots we’ve seen in science fiction? Where are the robots that can clear off the table, fold your laundry, and make you breakfast?

Unfortunately, the highly successful generative AI formula (big models trained on lots of Internet-sourced data) doesn’t easily carry over into robotics, because the Internet is not full of robotic-interaction data in the same way that it’s full of text and images. Robots need robot data to learn from, and this data is typically created slowly and tediously by researchers in laboratory environments for very specific tasks. Despite tremendous progress on robot-learning algorithms, without abundant data we still can’t enable robots to perform real-world tasks (like making breakfast) outside the lab. The most impressive results typically work only in a single laboratory, on a single robot, and often involve only a handful of behaviors.

If the abilities of each robot are limited by the time and effort it takes to manually teach it to perform a new task, what if we were to pool together the experiences of many robots, so a new robot could learn from all of them at once? We decided to give it a try. In 2023, our labs at Google and the University of California, Berkeley came together with 32 other robotics laboratories in North America, Europe, and Asia to undertake the RT-X project, with the goal of assembling data, resources, and code to make general-purpose robots a reality.

Here is what we learned from the first phase of this effort.

How to create a generalist robot

Humans are far better at this kind of learning. Our brains can, with a little practice, handle what are essentially changes to our body plan, which happens when we pick up a tool, ride a bicycle, or get in a car. That is, our “embodiment” changes, but our brains adapt. RT-X is aiming for something similar in robots: to enable a single deep neural network to control many different types of robots, a capability called cross-embodiment. The question is whether a deep neural network trained on data from a sufficiently large number of different robots can learn to “drive” all of them, even robots with very different appearances, physical properties, and capabilities. If so, this approach could potentially unlock the power of large datasets for robotic learning.

The scale of this project is very large because it has to be. The RT-X dataset currently contains nearly a million robotic trials for 22 types of robots, including many of the most commonly used robotic arms on the market. The robots in this dataset perform a huge range of behaviors, including picking and placing objects, assembly, and specialized tasks like cable routing. In total, there are about 500 different skills and interactions with thousands of different objects. It’s the largest open-source dataset of real robotic actions in existence.

Surprisingly, we found that our multirobot data could be used with relatively simple machine-learning methods, provided that we follow the recipe of using large neural-network models with large datasets. Leveraging the same kinds of models used in current LLMs like ChatGPT, we were able to train robot-control algorithms that do not require any special features for cross-embodiment. Much like a person can drive a car or ride a bicycle using the same brain, a model trained on the RT-X dataset can simply recognize what kind of robot it’s controlling from what it sees in the robot’s own camera observations. If the robot’s camera sees a UR10 industrial arm, the model sends commands appropriate to a UR10. If the model instead sees a low-cost WidowX hobbyist arm, the model moves it accordingly.
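To make the idea of pooling concrete, here is a minimal sketch of what “no special features for cross-embodiment” could look like at the data level. Everything in it is an illustrative assumption rather than the RT-X pipeline itself: the `Step` record, the `pool_steps` and `sample_batch` helpers, and the seven-number action vector are hypothetical names and shapes. The point is only that the pooled training examples carry no robot identifier, so a model trained on them has to infer the embodiment from the camera image.

```python
import random
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Step:
    """One training example. Note that there is no field naming the robot."""
    image: bytes                # camera frame (placeholder for pixel data)
    instruction: str            # natural-language command
    action: Tuple[float, ...]   # hypothetical shared action vector


def pool_steps(per_robot: Dict[str, List[Step]]) -> List[Step]:
    """Merge the steps from every robot into one list, discarding the robot label."""
    pooled: List[Step] = []
    for steps in per_robot.values():
        pooled.extend(steps)
    return pooled


def sample_batch(pooled: List[Step], batch_size: int, seed: int = 0) -> List[Step]:
    """Draw a training batch uniformly (with replacement) from the pooled data."""
    rng = random.Random(seed)
    return [rng.choice(pooled) for _ in range(batch_size)]
```

A real mixture would likely weight datasets rather than sample uniformly; uniform sampling is used here only to keep the sketch short.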

To test the capabilities of our model, five of the laboratories involved in the RT-X collaboration each tested it in a head-to-head comparison against the best control system they had developed independently for their own robot. Each lab’s test involved the tasks it was using for its own research, which included things like picking up and moving objects, opening doors, and routing cables through clips. Remarkably, the single unified model provided improved performance over each laboratory’s own best method, succeeding at the tasks about 50 percent more often on average.

While this result might seem surprising, we found that the RT-X controller could leverage the diverse experiences of other robots to improve robustness in different settings. Even within the same laboratory, every time a robot attempts a task, it finds itself in a slightly different situation, and so drawing on the experiences of other robots in other situations helped the RT-X controller with natural variability and edge cases.

Building robots that can reason

Encouraged by our success with combining data from many robot types, we next sought to investigate how such data can be incorporated into a system with more in-depth reasoning capabilities. Complex semantic reasoning is hard to learn from robot data alone. While the robot data can provide a range of physical capabilities, more complex tasks like “Move apple between can and orange” also require understanding the semantic relationships between objects in an image, basic common sense, and other symbolic knowledge that is not directly related to the robot’s physical capabilities.

So we decided to add another massive source of data to the mix: Internet-scale image and text data. We used an existing large vision-language model that is already proficient at many tasks that require some understanding of the connection between natural language and images. The model is similar to the ones available to the public, such as ChatGPT or Bard. These models are trained to output text in response to prompts containing images, allowing them to solve problems such as visual question-answering, captioning, and other open-ended visual understanding tasks. We discovered that such models can be adapted to robotic control simply by training them to also output robot actions in response to prompts framed as robotic commands (such as “Put the banana on the plate”). We applied this approach to the robotics data from the RT-X collaboration.
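One way to picture how a text-generating model can “output robot actions” is to turn each continuous motor command into a small integer, so that an action becomes a short string the model can emit like any other text. The sketch below illustrates that general idea under stated assumptions; it is not a description of the exact RT-X encoding. The 256-bin resolution, the normalized range of -1 to 1, and the function names are all hypothetical choices made for the example.

```python
from typing import List, Sequence

NUM_BINS = 256  # assumed resolution per action dimension


def action_to_tokens(action: Sequence[float], low: float = -1.0,
                     high: float = 1.0, num_bins: int = NUM_BINS) -> List[int]:
    """Map each continuous value to a bin index in [0, num_bins - 1]."""
    tokens = []
    for value in action:
        clipped = min(max(value, low), high)        # keep the value in range
        fraction = (clipped - low) / (high - low)   # rescale to [0, 1]
        tokens.append(min(int(fraction * num_bins), num_bins - 1))
    return tokens


def tokens_to_action(tokens: Sequence[int], low: float = -1.0,
                     high: float = 1.0, num_bins: int = NUM_BINS) -> List[float]:
    """Map bin indices back to the center value of each bin."""
    width = (high - low) / num_bins
    return [low + (t + 0.5) * width for t in tokens]


def tokens_to_text(tokens: Sequence[int]) -> str:
    """Render the bin indices as a space-separated string for a text model."""
    return " ".join(str(t) for t in tokens)
```

With these assumptions, `tokens_to_text(action_to_tokens([-1.0, 0.0, 1.0]))` yields the string `"0 128 255"`, and decoding the tokens recovers each value to within half a bin width. The trade-off is precision: coarser bins give shorter vocabularies but blurrier motions.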

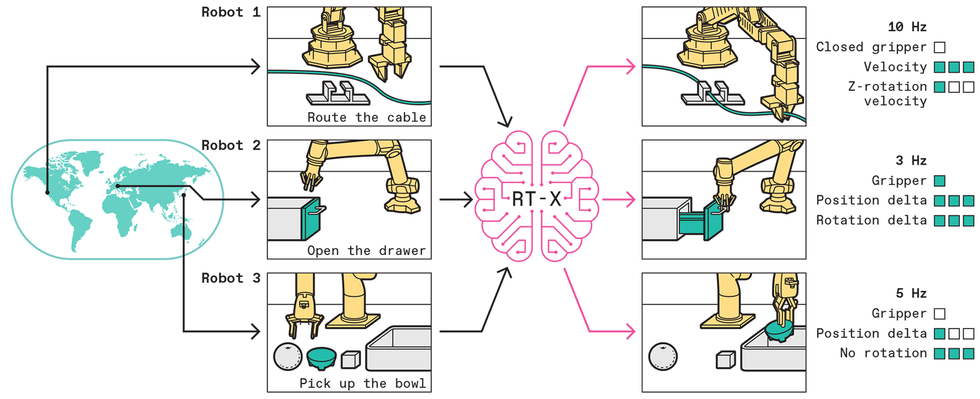

The RT-X model uses images or text descriptions of specific robot arms doing different tasks to output a series of discrete actions that will allow any robot arm to do those tasks. By collecting data from many robots doing many tasks from robotics labs around the world, we are building an open-source dataset that can be used to teach robots to be generally useful. Credit: Chris Philpot

To evaluate the combination of Internet-acquired smarts and multirobot data, we tested our RT-X model with Google’s mobile manipulator robot. We gave it our hardest generalization benchmark tests. The robot had to recognize objects and successfully manipulate them, and it also had to respond to complex text commands by making logical inferences that required integrating information from both text and images. The latter is one of the things that make humans such good generalists. Could we give our robots at least a hint of such capabilities?

Even without specific training, this Google research robot is able to follow the instruction “move apple between can and orange.” This capability is enabled by RT-X, a large robotic manipulation dataset and the first step toward a general robotic brain.

We conducted two sets of evaluations. As a baseline, we used a model that excluded all of the generalized multirobot RT-X data that didn’t involve Google’s robot. Google’s robot-specific dataset is in fact the largest part of the RT-X dataset, with over 100,000 demonstrations, so the question of whether all the other multirobot data would actually help in this case was very much open. Then we tried again with all that multirobot data included.

In one of the most difficult evaluation scenarios, the Google robot needed to accomplish a task that involved reasoning about spatial relations (“Move apple between can and orange”); in another task it had to solve rudimentary math problems (“Place an object on top of a paper with the solution to ‘2+3’”). These challenges were meant to test the crucial capabilities of reasoning and drawing conclusions.

In this case, the reasoning capabilities (such as the meaning of “between” and “on top of”) came from the Web-scale data included in the training of the vision-language model, while the ability to ground the reasoning outputs in robotic behaviors (commands that actually moved the robot arm in the right direction) came from training on cross-embodiment robot data from RT-X. In these evaluations, we asked the robots to perform tasks not included in their training data.

While these tasks are rudimentary for humans, they present a major challenge for general-purpose robots. Without robotic demonstration data that clearly illustrates concepts like “between,” “near,” and “on top of,” even a system trained on data from many different robots would not be able to figure out what these commands mean. By integrating Web-scale knowledge from the vision-language model, our complete system was able to solve such tasks, deriving the semantic concepts (in this case, spatial relations) from Internet-scale training, and the physical behaviors (picking up and moving objects) from multirobot RT-X data. To our surprise, we found that the inclusion of the multirobot data improved the Google robot’s ability to generalize to such tasks by a factor of three. This result suggests that not only was the multirobot RT-X data useful for acquiring a variety of physical skills, it could also help to better connect such skills to the semantic and symbolic knowledge in vision-language models. These connections give the robot a degree of common sense, which could one day enable robots to understand the meaning of complex and nuanced user commands like “Bring me my breakfast” while carrying out the actions to make it happen.

The next steps for RT-X

The RT-X project shows what is possible when the robot-learning community acts together. Because of this cross-institutional effort, we were able to put together a diverse robotic dataset and carry out comprehensive multirobot evaluations that wouldn’t be possible at any single institution. Since the robotics community can’t rely on scraping the Internet for training data, we need to create that data ourselves. We hope that more researchers will contribute their data to the RT-X database and join this collaborative effort. We also hope to provide tools, models, and infrastructure to support cross-embodiment research. We plan to go beyond sharing data across labs, and we hope that RT-X will grow into a collaborative effort to develop data standards, reusable models, and new techniques and algorithms.

Our early results hint at how large cross-embodiment robotics models could transform the field. Much as large language models have mastered a wide range of language-based tasks, in the future we might use the same foundation model as the basis for many real-world robotic tasks. Perhaps new robotic skills could be enabled by fine-tuning or even prompting a pretrained foundation model. In a similar way to how you can prompt ChatGPT to tell a story without first training it on that particular story, you could ask a robot to write “Happy Birthday” on a cake without having to tell it how to use a piping bag or what handwritten text looks like. Of course, much more research is needed for these models to take on that kind of general capability, as our experiments have focused on single arms with two-finger grippers doing simple manipulation tasks.

As more labs engage in cross-embodiment research, we hope to further push the frontier on what is possible with a single neural network that can control many robots. These advances might include adding diverse simulated data from generated environments, handling robots with different numbers of arms or fingers, using different sensor suites (such as depth cameras and tactile sensing), and even combining manipulation and locomotion behaviors. RT-X has opened the door for such work, but the most exciting technical developments are still ahead.

This is just the beginning. We hope that with this first step, we can together create the future of robotics: where general robotic brains can power any robot, benefiting from data shared by all robots around the world.
