Generative AI is today’s buzziest form of artificial intelligence, and it’s what powers chatbots like ChatGPT, Ernie, LLaMA, Claude, and Cohere, as well as image generators like DALL-E 2, Stable Diffusion, Adobe Firefly, and Midjourney. Generative AI is the branch of AI that enables machines to learn patterns from vast datasets and then to autonomously produce new content based on those patterns. Although generative AI is fairly new, there are already many examples of models that can produce text, images, videos, and audio.

Many so-called foundation models have been trained on enough data to be competent in a wide variety of tasks. For example, a large language model can generate essays, computer code, recipes, protein structures, jokes, medical diagnostic advice, and much more. It can also theoretically generate instructions for building a bomb or creating a bioweapon, though safeguards are supposed to prevent such types of misuse.

What’s the difference between AI, machine learning, and generative AI?

Artificial intelligence (AI) refers to a wide variety of computational approaches to mimicking human intelligence.

Machine learning (ML) is a subset of AI; it focuses on algorithms that enable systems to learn from data and improve their performance. Before generative AI came along, most ML models learned from datasets to perform tasks such as classification or prediction. Generative AI is a specialized type of ML involving models that perform the task of generating new content, venturing into the realm of creativity.

What architectures do generative AI models use?

Generative models are built using a variety of neural network architectures, essentially the design and structure that define how the model is organized and how information flows through it. Some of the most well-known architectures are variational autoencoders (VAEs), generative adversarial networks (GANs), and transformers. It’s the transformer architecture, first described in a seminal 2017 paper from Google, that powers today’s large language models. However, the transformer architecture is less suited to other types of generative AI, such as image and audio generation.

Autoencoders learn efficient representations of data through an encoder-decoder framework. The encoder compresses input data into a lower-dimensional space, known as the latent (or embedding) space, that preserves the most essential aspects of the data. A decoder can then use this compressed representation to reconstruct the original data. Once an autoencoder has been trained in this way, it can use novel inputs to generate what it considers the appropriate outputs. These models are often deployed in image-generation tools and have also found use in drug discovery, where they can be used to generate new molecules with desired properties.
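
The encode-then-decode idea can be illustrated with a deliberately simplified sketch. It is an assumption-laden stand-in, not how production autoencoders are built: real ones are nonlinear neural networks trained by gradient descent, whereas this version is purely linear and finds its one-dimensional latent space with power iteration. The function names and the sample points are hypothetical.

```python
import math

def principal_direction(points, steps=50):
    """Estimate the direction of greatest variance via power iteration."""
    dim = len(points[0])
    mean = [sum(p[i] for p in points) / len(points) for i in range(dim)]
    centered = [[p[i] - mean[i] for i in range(dim)] for p in points]
    # Unnormalized covariance matrix of the centered data.
    cov = [[sum(c[i] * c[j] for c in centered) for j in range(dim)]
           for i in range(dim)]
    direction = [1.0] * dim
    for _ in range(steps):
        direction = [sum(cov[i][j] * direction[j] for j in range(dim))
                     for i in range(dim)]
        norm = math.sqrt(sum(d * d for d in direction))
        direction = [d / norm for d in direction]
    return mean, direction

def encode(point, mean, direction):
    """Encoder: compress a point to a single latent number."""
    return sum((x - m) * d for x, m, d in zip(point, mean, direction))

def decode(code, mean, direction):
    """Decoder: reconstruct a point from its latent number."""
    return [m + code * d for m, d in zip(mean, direction)]

# Hypothetical 2-D data that happens to lie on a line, so one latent
# dimension is enough to reconstruct it.
points = [[1.0, 2.0], [2.0, 4.0], [3.0, 6.0], [4.0, 8.0]]
mean, direction = principal_direction(points)
codes = [encode(p, mean, direction) for p in points]
reconstructed = [decode(c, mean, direction) for c in codes]
```

Each two-number point is squeezed into one latent number and then expanded back. Feeding the decoder a latent value that never appeared in the data yields a new point on the same line, which is the toy analogue of generating novel outputs.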

With generative adversarial networks (GANs), the training involves a generator and a discriminator that can be considered adversaries. The generator strives to create realistic data, while the discriminator aims to distinguish between those generated outputs and real “ground truth” outputs. Every time the discriminator catches a generated output, the generator uses that feedback to try to improve the quality of its outputs. But the discriminator also receives feedback on its performance. This adversarial interplay results in the refinement of both components, leading to the generation of increasingly authentic-seeming content. GANs are best known for creating deepfakes, but they can also be used for more benign forms of image generation and many other applications.

The transformer is arguably the reigning champion of generative AI architectures for its ubiquity in today’s powerful large language models (LLMs). Its strength lies in its attention mechanism, which enables the model to focus on different parts of an input sequence while making predictions. In the case of language models, the input consists of strings of words that make up sentences, and the transformer predicts what words will come next (we’ll get into the details below). In addition, transformers can process all the elements of a sequence in parallel rather than marching through it from beginning to end, as earlier types of models did; this parallelization makes training faster and more efficient. When developers added vast datasets of text for transformer models to learn from, today’s remarkable chatbots emerged.
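
For readers who want to see the attention idea in code, here is a minimal sketch of scaled dot-product attention in plain Python. It rests on simplifying assumptions: real transformers derive queries, keys, and values from learned projection matrices and run many attention heads at once, while this version reuses the raw token vectors for all three roles. The token vectors are made up for illustration.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for q in queries:
        # How strongly this position matches every position in the sequence.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Output is a weighted blend of the value vectors.
        mixed = [sum(w * v[d] for w, v in zip(weights, values))
                 for d in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Three hypothetical token vectors standing in for a three-word input.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)
```

Each pass through the loop depends only on its own query, so nothing forces the positions to be handled in order. That independence is what lets real implementations compute every position at once as a single matrix operation.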

How do large language models work?

A transformer-based LLM is trained by giving it a vast dataset of text to learn from. The attention mechanism comes into play as it processes sentences and looks for patterns. By looking at all the words in a sentence at once, it gradually begins to understand which words are most commonly found together, and which words are most important to the meaning of the sentence. It learns these things by trying to predict the next word in a sentence and comparing its guess to the ground truth. Its errors act as feedback signals that cause the model to adjust the weights it assigns to various words before it tries again.
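
The guess-compare-adjust loop can be sketched in a few lines. This is only an illustration under stated assumptions: the three-word vocabulary, the learning rate of 0.5, and the idea of adjusting three raw scores directly are all hypothetical simplifications, whereas a real LLM adjusts billions of weights spread across many layers.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(probs, target_index):
    """Penalty for the guess: small when the true word gets high probability."""
    return -math.log(probs[target_index])

# Hypothetical setup: the model must pick the word after "the cat sat on the".
vocab = ["mat", "moon", "sofa"]
logits = [0.0, 0.0, 0.0]        # the model starts with no preference
target = vocab.index("mat")     # ground truth from the training text

losses = []
for _ in range(20):
    probs = softmax(logits)
    losses.append(cross_entropy(probs, target))
    # Error signal per candidate word: predicted probability minus truth (1 or 0).
    grads = [p - (1.0 if i == target else 0.0) for i, p in enumerate(probs)]
    # Nudge each score against its error; 0.5 is an assumed learning rate.
    logits = [x - 0.5 * g for x, g in zip(logits, grads)]

final_probs = softmax(logits)
```

With each round the penalty shrinks and the probability assigned to the correct word grows, which is the same feedback dynamic the paragraph above describes, just at toy scale.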

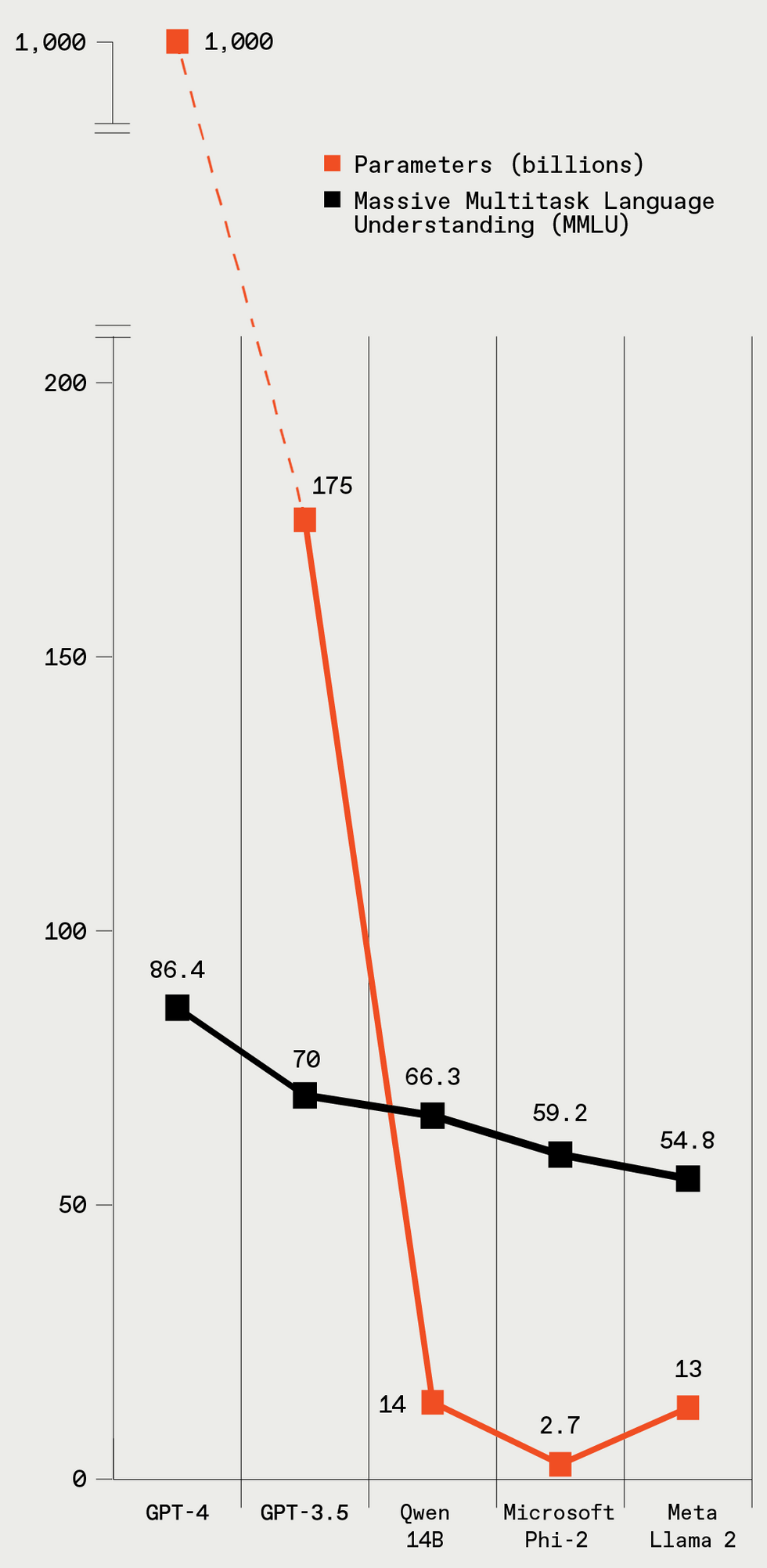

These five LLMs vary greatly in size (given in parameters), and the larger models have better performance on a standard LLM benchmark test. IEEE Spectrum

To explain the training process in slightly more technical terms, the text in the training data is broken down into elements called tokens, which are words or pieces of words (for simplicity’s sake, let’s say all tokens are words). As the model goes through the sentences in its training data and learns the relationships between tokens, it creates a list of numbers, called a vector, for each one. All the numbers in the vector represent various aspects of the word: its semantic meanings, its relationship to other words, its frequency of use, and so on. Similar words, like elegant and fancy, will have similar vectors, and will also be near each other in the vector space. These vectors are called word embeddings. The parameters of an LLM include the weights associated with all the word embeddings and the attention mechanism. GPT-4, the OpenAI model that’s considered the current champion, is rumored to have more than 1 trillion parameters.
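
One common way to measure how near two embeddings are is cosine similarity, sketched below. The three-number vectors are invented for illustration; embeddings in a real model are learned during training and typically have hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Return a value near 1.0 when two vectors point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical hand-written embeddings, not values from any real model.
embeddings = {
    "elegant": [0.9, 0.8, 0.1],
    "fancy":   [0.8, 0.9, 0.2],
    "tractor": [0.1, 0.2, 0.9],
}

similar = cosine_similarity(embeddings["elegant"], embeddings["fancy"])
dissimilar = cosine_similarity(embeddings["elegant"], embeddings["tractor"])
```

Here `similar` comes out close to 1 while `dissimilar` is much lower, mirroring the claim that related words sit near each other in the vector space.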

Given enough data and training time, the LLM begins to understand the subtleties of language. While much of the training involves looking at text sentence by sentence, the attention mechanism also captures relationships between words throughout a longer text sequence of many paragraphs. Once an LLM is trained and is ready for use, the attention mechanism is still in play. When the model is generating text in response to a prompt, it’s using its predictive powers to decide what the next word should be. When generating longer pieces of text, it predicts the next word in the context of all the words it has written so far; this function increases the coherence and continuity of its writing.
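
The word-by-word generation loop can be sketched with a toy model. The big assumption is that this stand-in is not a transformer at all: it simply counts which word followed which in a tiny made-up corpus, and it looks only at the last word written, whereas an LLM conditions on everything written so far. The function names and corpus are hypothetical.

```python
from collections import Counter, defaultdict

def train_bigram_model(text):
    """Count, for every word, which words followed it in the training text."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def generate(counts, prompt, max_new_words):
    """Extend the prompt one word at a time, always taking the likeliest next word."""
    words = prompt.split()
    for _ in range(max_new_words):
        candidates = counts.get(words[-1])
        if not candidates:
            break  # the model never saw anything follow this word
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)

corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigram_model(corpus)
story = generate(model, "the", 3)
```

Every new word is appended to the running text before the next prediction is made, so the output feeds back into the input. That feedback loop is the part that carries over to real LLMs; the much larger context they consider is what gives their longer passages coherence.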

Why do large language models hallucinate?

You may have heard that LLMs sometimes “hallucinate.” That’s a polite way to say they make stuff up very convincingly. A model sometimes generates text that fits the context and is grammatically correct, yet the material is erroneous or nonsensical. This bad habit stems from LLMs training on vast troves of data drawn from the Internet, plenty of which is not factually accurate. Since the model is simply trying to predict the next word in a sequence based on what it has seen, it may generate plausible-sounding text that has no grounding in reality.

Why is generative AI controversial?

One source of controversy for generative AI is the provenance of its training data. Most AI companies that train large models to generate text, images, video, and audio have not been transparent about the content of their training datasets. Various leaks and experiments have revealed that those datasets include copyrighted material such as books, newspaper articles, and movies. A number of lawsuits are underway to determine whether use of copyrighted material for training AI systems constitutes fair use, or whether the AI companies need to pay the copyright holders for use of their material.

On a related note, many people are concerned that the widespread use of generative AI will take jobs away from creative humans who make art, music, written works, and so forth. And also, possibly, from humans who do a wide range of white-collar jobs, including translators, paralegals, customer-service representatives, and journalists. There have already been a few troubling layoffs, but it’s hard to say yet whether generative AI will be reliable enough for large-scale enterprise applications. (See above about hallucinations.)

Finally, there’s the danger that generative AI will be used to make bad stuff. And there are of course many categories of bad stuff it could theoretically be used for. Generative AI can be used for personalized scams and phishing attacks: For example, using “voice cloning,” scammers can copy the voice of a specific person and call the person’s family with a plea for help (and money). All formats of generative AI (text, audio, image, and video) can be used to generate misinformation by creating plausible-seeming representations of things that never happened, which is a particularly worrying possibility when it comes to elections. (Meanwhile, as Spectrum reported this week, the U.S. Federal Communications Commission has responded by outlawing AI-generated robocalls.) Image- and video-generating tools can be used to produce nonconsensual pornography, although the tools made by mainstream companies disallow such use. And chatbots can theoretically walk a would-be terrorist through the steps of making a bomb, nerve gas, and a host of other horrors. Although the big LLMs have safeguards to prevent such misuse, some hackers delight in circumventing those safeguards. What’s more, “uncensored” versions of open-source LLMs are out there.

Despite such potential problems, many people think that generative AI can also make people more productive and could be used as a tool to enable entirely new forms of creativity. We’ll likely see both disasters and creative flowerings and plenty else that we don’t expect. But knowing the basics of how these models work is increasingly crucial for tech-savvy people today. Because no matter how sophisticated these systems grow, it’s the humans’ job to keep them running, make the next ones better, and with any luck, help people out too.