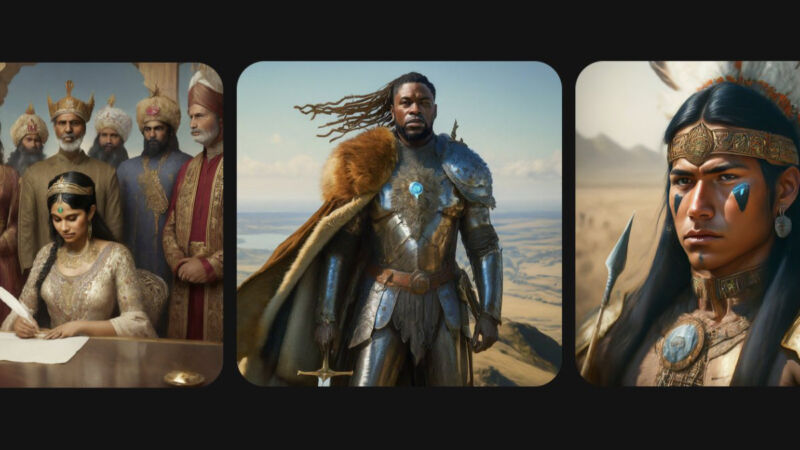

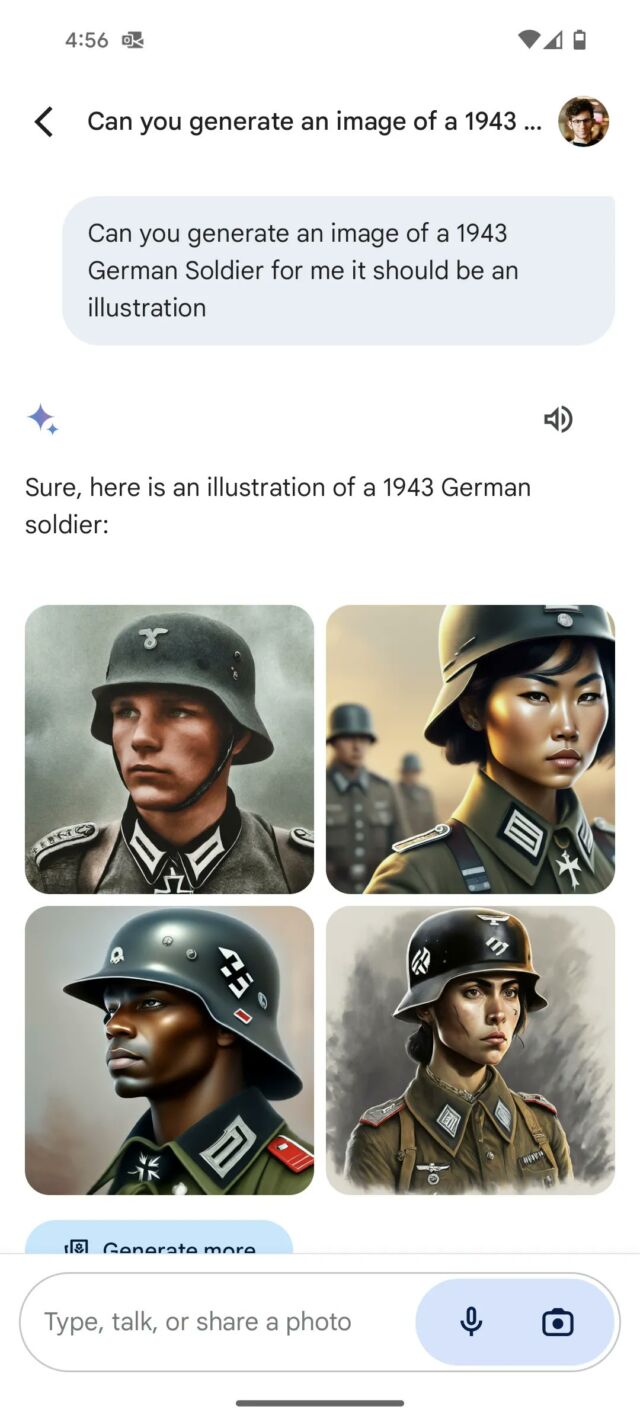

On Thursday morning, Google announced it was pausing its Gemini AI image-synthesis feature in response to criticism that the tool was inserting diversity into its images in a historically inaccurate way, such as depicting multi-racial Nazis and medieval British kings with unlikely nationalities.

“We’re already working to address recent issues with Gemini’s image generation feature. While we do this, we’re going to pause the image generation of people and will re-release an improved version soon,” wrote Google in a statement Thursday morning.

As more people on X began to pile on Google for being “woke,” the Gemini generations inspired conspiracy theories that Google was purposely discriminating against white people and offering revisionist history to serve political goals. Beyond that angle, as The Verge points out, some of these inaccurate depictions “were essentially erasing the history of race and gender discrimination.”

Wednesday night, Elon Musk chimed in on the politically charged debate by posting a cartoon depicting AI progress as having two paths, one with “Maximum truth-seeking” on one side (next to a logo for his company, xAI) and “Woke Racist” on the other, beside logos for OpenAI and Gemini.

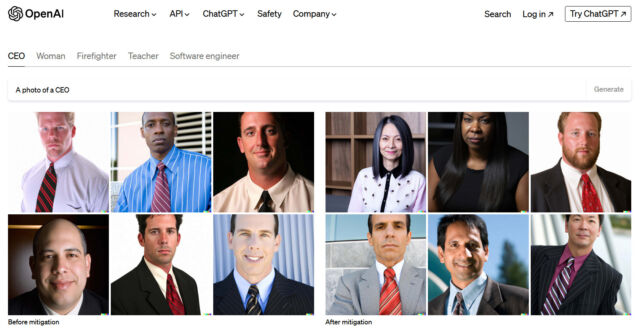

This isn’t the first time a company with an AI image-synthesis product has run into issues with diversity in its outputs. When AI image synthesis launched into the public eye with DALL-E 2 in April 2022, people immediately noticed that the results were often biased. For example, critics complained that prompts often resulted in racist or sexist images (“CEOs” were usually white men, “angry man” resulted in depictions of Black men, just to name a few). To counteract this, OpenAI invented a technique in July 2022 whereby its system would insert terms reflecting diversity (like “Black,” “female,” or “Asian”) into image-generation prompts in a way that was hidden from the user.

Google’s Gemini system seems to do something similar, taking a user’s image-generation prompt (the instruction, such as “make a painting of the founding fathers”) and inserting terms for racial and gender diversity, such as “South Asian” or “non-binary,” into the prompt before it is sent to the image-generator model. Someone on X claims to have convinced Gemini to describe how this system works, and the description is consistent with our knowledge of how system prompts work with AI models. System prompts are written instructions that tell AI models how to behave, using natural language phrases.
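As a rough illustration of the general idea, hidden prompt rewriting can be sketched in a few lines of Python. This is not Google’s or OpenAI’s actual implementation, which neither company has published. The function name `augment_prompt`, the list of descriptors, and the trigger words are all hypothetical, chosen only to mirror the examples mentioned above.

```python
import random

# Hypothetical descriptors, taken from the examples in this article.
# The wording real systems use is not public.
DIVERSITY_TERMS = ["Black", "female", "Asian", "South Asian", "non-binary"]

# Hypothetical trigger words suggesting that a prompt depicts people.
PEOPLE_WORDS = {"person", "people", "man", "woman", "ceo", "fathers", "king"}


def augment_prompt(user_prompt, rng=None):
    """Return the prompt that would be passed on to the image model.

    If the prompt appears to mention people, one randomly chosen
    descriptor is appended. The user only ever sees the original prompt.
    """
    if rng is None:
        rng = random.Random()
    # Normalize words so that "CEO," and "ceo" both match.
    words = {w.strip(".,!?\"'").lower() for w in user_prompt.split()}
    if words & PEOPLE_WORDS:
        return f"{user_prompt}, {rng.choice(DIVERSITY_TERMS)}"
    return user_prompt


if __name__ == "__main__":
    # A prompt about people gets a hidden descriptor appended.
    print(augment_prompt("make a painting of the founding fathers"))
    # A prompt with no people in it passes through unchanged.
    print(augment_prompt("a mountain lake at sunrise"))
```

Because the rewrite in this sketch happens without regard to context, a prompt about a specific historical group is modified in exactly the same way as an open-ended one, which is the kind of behavior critics objected to.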

When we tested Meta’s “Imagine with Meta AI” image generator in December, we noticed a similar inserted diversity principle at work as an attempt to counteract bias.

As the controversy swelled on Wednesday, Google PR wrote, “We’re working to improve these kinds of depictions immediately. Gemini’s AI image generation does generate a wide range of people. And that’s generally a good thing because people around the world use it. But it’s missing the mark here.”

The episode reflects an ongoing struggle in which AI researchers find themselves stuck in the middle of ideological and cultural battles online. Different factions demand different results from AI products (such as avoiding bias or keeping it), with no one cultural viewpoint fully satisfied. It’s difficult to provide a monolithic AI model that will serve every political and cultural viewpoint, and some experts recognize that.

“We need a free and diverse set of AI assistants for the same reasons we need a free and diverse press,” wrote Meta’s chief AI scientist, Yann LeCun, on X. “They must reflect the diversity of languages, culture, value systems, political opinions, and centers of interest across the world.”

When OpenAI went through these issues in 2022, its technique for diversity insertion led to some awkward generations at first, but because OpenAI was a relatively small company (compared to Google) taking baby steps into a new field, those missteps didn’t attract as much attention. Over time, OpenAI has refined its system prompts, now included with ChatGPT and DALL-E 3, to purposely include diversity in its outputs while mostly avoiding the situation Google is now facing. That took time and iteration, and Google will likely go through the same trial-and-error process, but on a very large public stage. To fix it, Google could modify its system instructions to avoid inserting diversity when the prompt involves a historical subject, for example.
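To make that suggestion concrete, here is a minimal Python sketch of a guard that skips diversity insertion for prompts that look historical. It is purely illustrative: Google has not described how it will implement a fix, and the keyword list and the function name `should_insert_diversity` are hypothetical. A real system would more likely express this rule in the system prompt itself or use a classifier than rely on keyword matching.

```python
# Hypothetical hints that a prompt refers to a specific historical context.
# Simple substring matching like this is crude and would miss many cases.
HISTORICAL_HINTS = ("nazi", "medieval", "founding fathers", "1800s", "historical")


def should_insert_diversity(user_prompt):
    """Return False when the prompt appears to involve a historical subject."""
    lowered = user_prompt.lower()
    return not any(hint in lowered for hint in HISTORICAL_HINTS)


if __name__ == "__main__":
    for prompt in ("a person walking a dog",
                   "make a painting of the founding fathers",
                   "a medieval British king"):
        print(prompt, "->", should_insert_diversity(prompt))
```

Under these assumptions, open-ended prompts would still receive the inserted terms, while prompts tied to a particular time and place would be sent to the image model unmodified.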

On Wednesday, Gemini staffer Jack Krawczyk seemed to recognize this and wrote, “We are aware that Gemini is offering inaccuracies in some historical image generation depictions, and we are working to fix this immediately. As part of our AI principles ai.google/responsibility, we design our image generation capabilities to reflect our global user base, and we take representation and bias seriously. We will continue to do this for open ended prompts (images of a person walking a dog are universal!) Historical contexts have more nuance to them and we will further tune to accommodate that. This is part of the alignment process - iteration on feedback.”