Endor Labs is trying to help companies select more secure open source AI models with the release of Endor Scores for AI Models.

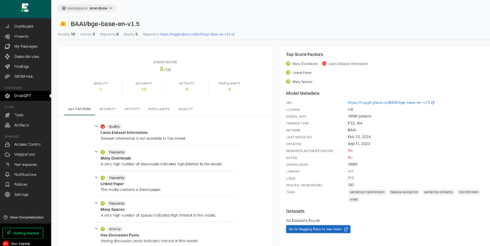

The new scoring system evaluates AI models on Hugging Face using 50 metrics related to security, popularity, quality, and activity. The company had previously developed a scoring system for open source packages in general. That system included 150 checks, such as whether a package has corporate sponsorship or multiple maintainers, how many releases it has had in the last 60 days, and whether it has known vulnerabilities. Endor Scores for AI Models builds on that work and narrows the checks to AI-specific concerns.

Developers can search for models by asking questions, rather than needing to already know which particular model they want. Example prompts, according to Endor Labs, include “What models can I use to classify sentiments? What are the most popular models from Meta? And what is a popular model for voice in Hugging Face?”

According to the company, the AI landscape is currently like the “Wild West of technology development.” While platforms like Hugging Face make it easier to find and adopt AI models, this convenience might also lead to security issues, either in the models themselves or in the models they depend on.

“It’s always been our mission to secure everything your code depends on, and AI models are the next great frontier in that critical task,” said Varun Badhwar, co-founder and CEO of Endor Labs. “Every organization is experimenting with AI models, whether to power particular applications or build entire AI-based businesses. Security has to keep pace, and there’s a rare opportunity here to start clean, and avoid risks and high maintenance costs down the road.”

Endor Scores for AI Models is now available to all Endor Labs customers, and non-customers can sign up for a free 30-day trial to access the service.