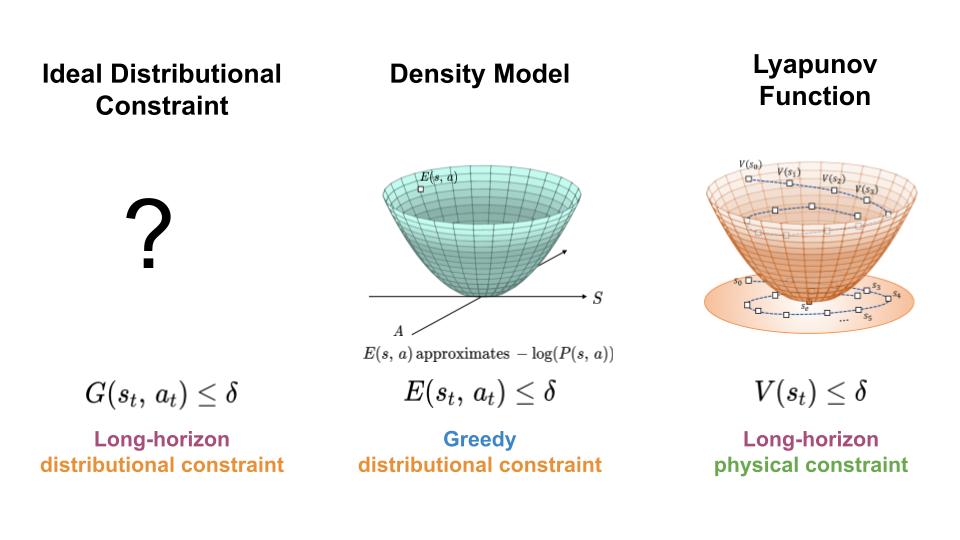

To regulate the distribution shift experienced by learning-based controllers, we seek a mechanism for constraining the agent to regions of high data density throughout its trajectory (left). Here, we present an approach which achieves this goal by combining features of density models (middle) and Lyapunov functions (right).

In order to make use of machine learning and reinforcement learning in controlling real-world systems, we must design algorithms which not only achieve good performance, but also interact with the system in a safe and reliable manner. Most prior work on safety-critical control focuses on maintaining the safety of the physical system, e.g. avoiding falling over for legged robots, or colliding into obstacles for autonomous vehicles. However, for learning-based controllers, there is another source of safety concern: because machine learning models are only optimized to output correct predictions on the training data, they are prone to outputting erroneous predictions when evaluated on out-of-distribution inputs. Thus, if an agent visits a state or takes an action that is very different from those in the training data, a learning-enabled controller may “exploit” the inaccuracies in its learned component and output actions that are suboptimal or even dangerous.

To prevent these potential “exploitations” of model inaccuracies, we propose a new framework to reason about the safety of a learning-based controller with respect to its training distribution. The central idea behind our work is to view the training data distribution as a safety constraint, and to draw on tools from control theory to control the distributional shift experienced by the agent during closed-loop control. More specifically, we’ll discuss how Lyapunov stability can be unified with density estimation to produce Lyapunov density models, a new kind of safety “barrier” function which can be used to synthesize controllers with guarantees of keeping the agent in regions of high data density. Before we introduce our new framework, we will first give an overview of existing techniques for guaranteeing physical safety via barrier functions.

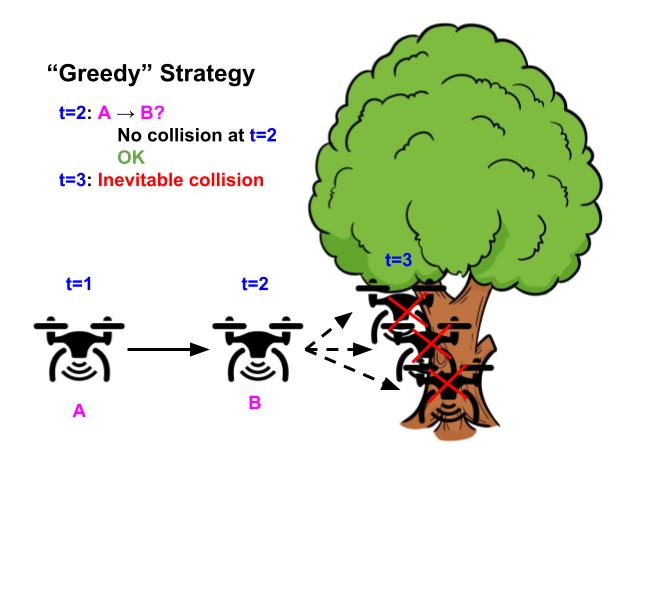

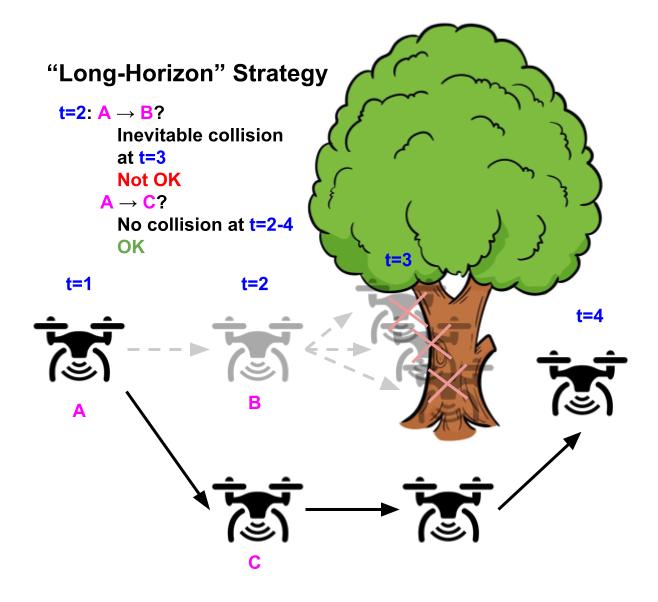

In control theory, a central topic of study is: given known system dynamics, $s_{t+1}=f(s_t, a_t)$, and known system constraints, $s \in C$, how can we design a controller that is guaranteed to keep the system within the specified constraints? Here, $C$ denotes the set of states that are deemed safe for the agent to visit. This problem is challenging because the specified constraints need to be satisfied over the agent’s entire trajectory horizon ($s_t \in C$ $\forall\, 0 \leq t \leq T$). If the controller uses a simple “greedy” strategy of avoiding constraint violations in the next time step (not taking $a_t$ for which $f(s_t, a_t) \notin C$), the system may still end up in an “irrecoverable” state, which itself is considered safe, but will inevitably lead to an unsafe state in the future regardless of the agent’s future actions. In order to avoid visiting these “irrecoverable” states, the controller must employ a more “long-horizon” strategy which involves predicting the agent’s entire future trajectory to avoid safety violations at any point in the future (avoid $a_t$ for which all possible $\{ a_{\hat{t}} \}_{\hat{t}=t+1}^H$ lead to some $\bar{t}$ where $s_{\bar{t}} \notin C$ and $t<\bar{t} \leq T$). However, predicting the agent’s full trajectory at every step is extremely computationally intensive, and often infeasible to perform online during run-time.

Illustrative example of a drone whose goal is to fly as straight as possible while avoiding obstacles. Using the “greedy” strategy of avoiding safety violations (left), the drone flies straight because there’s no obstacle in the next timestep, but inevitably crashes in the future because it can’t turn in time. In contrast, using the “long-horizon” strategy (right), the drone turns early and successfully avoids the tree, by considering the entire future horizon of its trajectory.
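To make the difference between the two strategies concrete, here is a minimal sketch on a toy one-dimensional version of the drone. Everything in it is an assumption made for illustration and does not come from the paper: the integer state `(position, velocity)`, the three acceleration actions, the wall at position 10, and the helper names `step`, `recoverable`, and `rollout`. The drone prefers to accelerate, and an action is only rejected when the chosen strategy says it is unsafe.

```python
from functools import lru_cache

WALL = 10             # hypothetical constraint: states with position > WALL are unsafe
ACTIONS = (1, 0, -1)  # accelerate, coast, brake, listed in order of task preference
T = 12                # number of control steps in the episode

def step(state, a):
    """Toy dynamics f(s, a): the action changes velocity, velocity changes position."""
    p, v = state
    v_next = v + a
    return (p + v_next, v_next)

def is_safe(state):
    return state[0] <= WALL

@lru_cache(maxsize=None)
def recoverable(state, steps_left):
    """True if some action sequence keeps the drone safe for `steps_left` more steps."""
    if not is_safe(state):
        return False
    if steps_left == 0:
        return True
    return any(recoverable(step(state, a), steps_left - 1) for a in ACTIONS)

def rollout(long_horizon):
    """Run one episode; return (final state, steps taken, whether it stayed safe)."""
    state, t = (0, 0), 0
    while t < T:
        remaining = T - t - 1
        if long_horizon:
            # Reject actions from which every future action sequence hits the wall.
            ok = [a for a in ACTIONS if recoverable(step(state, a), remaining)]
        else:
            # Greedy: only check that the very next state is inside C.
            ok = [a for a in ACTIONS if is_safe(step(state, a))]
        if not ok:
            return state, t, False  # every available action violates the constraint
        state = step(state, ok[0])
        t += 1
    return state, t, True

print("greedy:      ", rollout(long_horizon=False))
print("long-horizon:", rollout(long_horizon=True))
```

In this toy setup the greedy rollout accelerates until it reaches position 10 with velocity 4, a state that is inside $C$ but from which even full braking overshoots the wall. The long-horizon rollout starts braking earlier and comes to rest at the wall, at the cost of a recursive search over future action sequences at every step.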

Control theorists tackle this challenge by designing “barrier” functions, $v(s)$, to constrain the controller at each step (only allow $a_t$ which satisfy $v(f(s_t, a_t)) \leq 0$). In order to ensure the agent remains safe throughout its entire trajectory, the constraint induced by barrier functions ($v(f(s_t, a_t)) \leq 0$) prevents the agent from visiting both unsafe states and irrecoverable states which inevitably lead to unsafe states in the future. This strategy essentially amortizes the computation of looking into the future for inevitable failures when designing the safety barrier function, which only needs to be done once and can be computed offline. This way, at runtime, the policy only needs to employ the greedy constraint satisfaction strategy on the barrier function $v(s)$ in order to ensure safety for all future timesteps.

The blue region denotes the set of states allowed by the barrier function constraint, $v(s) \leq 0$. Using a “long-horizon” barrier function, the drone only needs to greedily ensure that the barrier function constraint $v(s) \leq 0$ is satisfied for the next state, in order to avoid safety violations for all future timesteps.
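The amortization idea can be sketched on a small finite system. The example below is a rough illustration under stated assumptions, not a method from the paper: the toy dynamics, the bounded constraint set `C`, and the names `compute_safe_set`, `barrier`, and `greedy_on_barrier` are all hypothetical, and the barrier is a crude indicator-style function (negative inside the safe set, positive outside) rather than the smooth functions usually designed in control theory. The offline step repeatedly discards states from which no action stays inside the remaining set; the runtime step is purely greedy.

```python
WALL, VMAX = 10, 5
ACTIONS = (1, 0, -1)  # accelerate, coast, brake, listed in order of task preference

def step(state, a):
    """Toy dynamics f(s, a) on integer (position, velocity) states."""
    p, v = state
    v_next = v + a
    return (p + v_next, v_next)

# Hypothetical constraint set C: positions 0..WALL and speeds up to VMAX.
C = {(p, v) for p in range(WALL + 1) for v in range(-VMAX, VMAX + 1)}

def compute_safe_set(constraint_set):
    """Offline: shrink C until every remaining state has an action that stays inside."""
    safe = set(constraint_set)
    while True:
        keep = {s for s in safe if any(step(s, a) in safe for a in ACTIONS)}
        if keep == safe:
            return safe
        safe = keep

SAFE = compute_safe_set(C)  # computed once, before deployment

def barrier(state):
    """Indicator-style barrier v(s): <= 0 on the safe set, > 0 elsewhere."""
    return -1.0 if state in SAFE else 1.0

def greedy_on_barrier(state, n_steps):
    """Runtime: take the preferred action whose next state satisfies v(s') <= 0."""
    trajectory = [state]
    for _ in range(n_steps):
        allowed = [a for a in ACTIONS if barrier(step(state, a)) <= 0]
        state = step(state, allowed[0])
        trajectory.append(state)
    return trajectory

trajectory = greedy_on_barrier((0, 0), 50)
print("stayed inside C:", all(s in C for s in trajectory))
print("(10, 4) in C:", (10, 4) in C, "| barrier value:", barrier((10, 4)))
```

The state `(10, 4)` lies inside `C` but receives a positive barrier value, because every action from it leaves `C`. Since the safe set is closed under at least one action at every state, the one-step check at runtime is enough to keep the whole trajectory inside `C`.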

Here, we used the notion of a “barrier” function as an umbrella term to describe a number of different kinds of functions whose role is to constrain the controller in order to make long-horizon guarantees. Some specific examples include control Lyapunov functions for guaranteeing stability, control barrier functions for guaranteeing general safety constraints, and the value function in Hamilton-Jacobi reachability for guaranteeing general safety constraints under external disturbances. More recently, there has also been some work on learning barrier functions, for settings where the system is unknown or where barrier functions are difficult to design. However, prior works in both traditional and learning-based barrier functions are mainly focused on making guarantees of physical safety. In the next section, we will discuss how we can extend these ideas to regulate the distribution shift experienced by the agent when using a learning-based controller.

To prevent model exploitation due to distribution shift, many learning-based control algorithms constrain or regularize the controller to prevent the agent from taking low-likelihood actions or visiting low-likelihood states, for instance in offline RL, model-based RL, and imitation learning. However, most of these methods only constrain the controller with a single-step estimate of the data distribution, akin to the “greedy” strategy of keeping an autonomous drone safe by preventing actions which cause it to crash in the next timestep. As we saw in the illustrative figures above, this strategy is not enough to guarantee that the drone will not crash (or go out-of-distribution) in another future timestep.

How can we design a controller for which the agent is guaranteed to stay in-distribution for its entire trajectory? Recall that barrier functions can be used to guarantee constraint satisfaction for all future timesteps, which is exactly the kind of guarantee we hope to make with regard to the data distribution. Based on this observation, we propose a new kind of barrier function: the Lyapunov density model (LDM), which merges the dynamics-aware aspect of a Lyapunov function with the data-aware aspect of a density model (it is in fact a generalization of both types of function). Analogous to how Lyapunov functions keep the system from becoming physically unsafe, our Lyapunov density model keeps the system from going out-of-distribution.

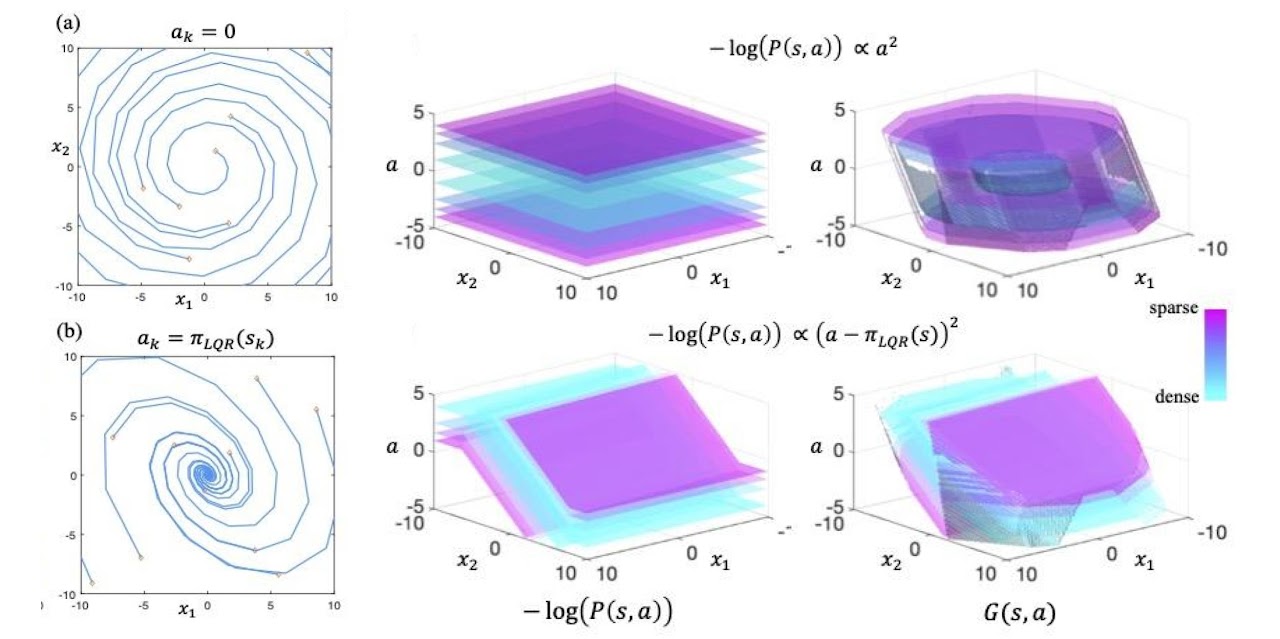

An LDM ($G(s, a)$) maps state and action pairs to negative log densities, where the values of $G(s, a)$ represent the best data density the agent is able to stay above throughout its trajectory. It can be intuitively thought of as a “dynamics-aware, long-horizon” transformation on a single-step density model ($E(s, a)$), where $E(s, a)$ approximates the negative log likelihood of the data distribution. Since a single-step density model constraint ($E(s, a) \leq -\log(c)$ where $c$ is a cutoff density) might still allow the agent to visit “irrecoverable” states which inevitably cause the agent to go out-of-distribution, the LDM transformation increases the value of those “irrecoverable” states until they become “recoverable” with respect to their updated value. As a result, the LDM constraint ($G(s, a) \leq -\log(c)$) restricts the agent to a smaller set of states and actions which excludes the “irrecoverable” states, thereby ensuring the agent is able to remain in high data-density regions throughout its entire trajectory.

Example of data distributions (middle) and their associated LDMs (right) for a 2D linear system (left). LDMs can be viewed as “dynamics-aware, long-horizon” transformations on density models.

How exactly does this “dynamics-aware, long-horizon” transformation work? Given a data distribution $P(s, a)$ and dynamical system $s_{t+1} = f(s_t, a_t)$, we define the following as the LDM operator: $\mathcal{T}G(s, a) = \max\{-\log P(s, a), \min_{a'} G(f(s, a), a')\}$. Suppose we initialize $G(s, a)$ to be $-\log P(s, a)$. Under one iteration of the LDM operator, the value of a state action pair, $G(s, a)$, can either remain at $-\log P(s, a)$ or increase in value, depending on whether the value at the best state action pair in the next timestep, $\min_{a'} G(f(s, a), a')$, is larger than $-\log P(s, a)$. Intuitively, if the value at the best next state action pair is larger than the current $G(s, a)$ value, this means that the agent is unable to remain at the current density level regardless of its future actions, making the current state “irrecoverable” with respect to the current density level. By increasing the current value of $G(s, a)$, we are “correcting” the LDM such that its constraints would not include “irrecoverable” states. Here, one LDM operator update captures the effect of looking into the future for one timestep. If we repeatedly apply the LDM operator on $G(s, a)$ until convergence, the final LDM will be free of “irrecoverable” states in the agent’s entire future trajectory.
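The operator is easy to run by hand on a tiny tabular problem. The sketch below assumes a made-up five-state system with two actions, a made-up data distribution `P`, and oracle access to both; none of these numbers come from the paper. States 0 to 2 are well covered by the data, state 4 is barely covered, and state 3 is a “slope” from which every action slides into state 4.

```python
import math

STAY, ADVANCE = 0, 1
N_STATES = 5

def f(s, a):
    """Hypothetical deterministic dynamics: state 3 always slides into absorbing state 4."""
    if s >= 3:
        return 4
    return s + 1 if a == ADVANCE else s

# Hypothetical data distribution P(s, a); rows are states, columns are actions.
P = [
    [0.15, 0.15],
    [0.15, 0.15],
    [0.15, 0.10],
    [0.07, 0.06],
    [0.01, 0.01],
]

# Single-step density model E(s, a) = -log P(s, a).
E = [[-math.log(p) for p in row] for row in P]

def ldm_operator(G):
    """One application of T G(s, a) = max{-log P(s, a), min_a' G(f(s, a), a')}."""
    return [
        [max(E[s][a], min(G[f(s, a)])) for a in (STAY, ADVANCE)]
        for s in range(N_STATES)
    ]

# Initialize G to -log P and apply the operator until nothing changes.
G = [row[:] for row in E]
while True:
    G_next = ldm_operator(G)
    if G_next == G:
        break
    G = G_next

# Compare the two constraints at a cutoff density c.
c = 0.05
threshold = -math.log(c)
pairs = [(s, a) for s in range(N_STATES) for a in (STAY, ADVANCE)]
density_ok = {(s, a) for s, a in pairs if E[s][a] <= threshold}
ldm_ok = {(s, a) for s, a in pairs if G[s][a] <= threshold}

print("allowed by density model only:", sorted(density_ok - ldm_ok))
print("allowed by LDM:", sorted(ldm_ok))
```

With these numbers, the single-step density constraint still permits advancing from state 2 and both actions in state 3, since each of those pairs has density above `c`. After convergence, the LDM raises their values to the level of state 4, so the LDM constraint removes them and keeps only the pairs from which the agent can stay above the cutoff indefinitely.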

To use an LDM in control, we can train an LDM and a learning-based controller on the same training dataset and constrain the controller’s action outputs with an LDM constraint ($G(s, a) \leq -\log(c)$). Because the LDM constraint excludes both states with low density and “irrecoverable” states, the learning-based controller will be able to avoid out-of-distribution inputs throughout the agent’s entire trajectory. Furthermore, by choosing the cutoff density of the LDM constraint, $c$, the user is able to control the tradeoff between protecting against model error vs. flexibility for performing the desired task.

Example evaluation of ours and baseline methods on a hopper control task for different values of constraint thresholds (x-axis). On the right, we show example trajectories from when the threshold is too low (hopper falling over due to excessive model exploitation), just right (hopper successfully hopping towards target location), or too high (hopper standing still due to over conservatism).
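One simple way to apply the LDM constraint to a controller with a discrete action set is to filter its candidate actions, as in the sketch below. This is only an illustration under assumptions: the function `constrained_action`, the per-action controller scores, the example LDM values, and the fallback to the lowest-$G$ action when nothing passes the constraint are all hypothetical choices, not the procedure used in the paper.

```python
import math

def constrained_action(scores, ldm_values, c):
    """Pick the controller's best-scoring action among those with G(s, a) <= -log(c).

    scores[a]     : the learned controller's preference for action a (higher is better)
    ldm_values[a] : G(s, a) for the current state s
    c             : cutoff density chosen by the user
    """
    threshold = -math.log(c)
    allowed = [a for a, g in enumerate(ldm_values) if g <= threshold]
    if not allowed:
        # Assumed fallback: nothing satisfies the constraint, so take the action
        # with the highest achievable data density (lowest G).
        return min(range(len(ldm_values)), key=lambda a: ldm_values[a])
    return max(allowed, key=lambda a: scores[a])

# Hypothetical values for a single state with three candidate actions.
scores = [0.1, 0.6, 0.9]      # the controller likes action 2 best
ldm_values = [0.5, 1.5, 3.0]  # but action 2 leads towards low-density regions

for c in (math.exp(-3.5), math.exp(-2.0), math.exp(-0.2)):
    print(f"c = {c:.3f} -> action {constrained_action(scores, ldm_values, c)}")
```

With a small cutoff density the constraint is loose and the controller’s favorite action passes through; with a moderate cutoff the choice shifts to the best in-distribution action; with a very large cutoff no action qualifies and the controller is reduced to the most conservative option.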

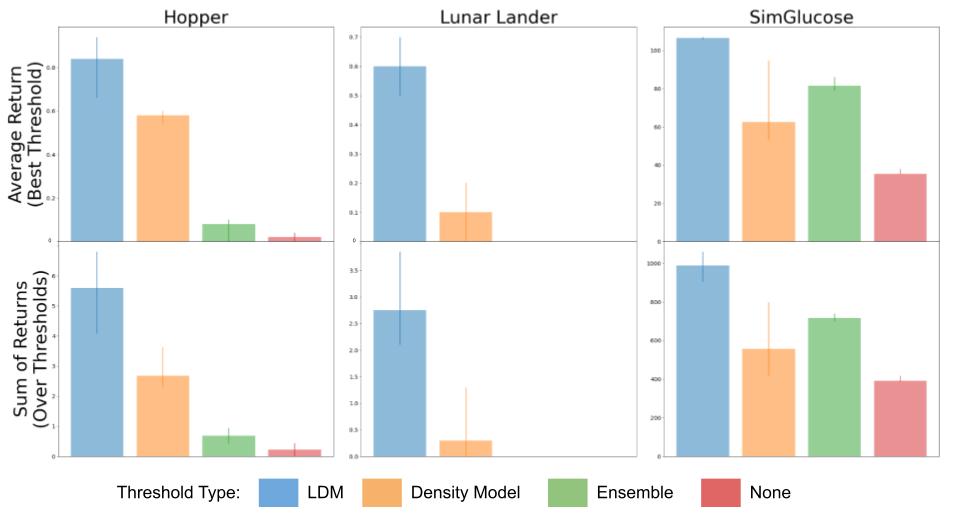

So far, we have only discussed the properties of a “perfect” LDM, which can be found if we had oracle access to the data distribution and dynamical system. In practice, though, we approximate the LDM using only data samples from the system. This causes a problem to arise: even though the role of the LDM is to prevent distribution shift, the LDM itself can also suffer from the negative effects of distribution shift, which degrades its effectiveness for preventing distribution shift. To understand the degree to which the degradation happens, we analyze this problem from both a theoretical and empirical perspective. Theoretically, we show that even if there are errors in the LDM learning procedure, an LDM-constrained controller is still able to maintain guarantees of keeping the agent in-distribution. This guarantee is somewhat weaker than the original guarantee provided by a perfect LDM, and the amount of degradation depends on the scale of the errors in the learning procedure. Empirically, we approximate the LDM using deep neural networks, and show that using a learned LDM to constrain the learning-based controller still provides performance improvements compared to using single-step density models on several domains.

Evaluation of our method (LDM) compared to constraining a learning-based controller with a density model, the variance over an ensemble of models, and no constraint at all on several domains including hopper, lunar lander, and glucose control.

Currently, one of the biggest challenges in deploying learning-based controllers on real-world systems is their potential brittleness to out-of-distribution inputs, and lack of guarantees on performance. Conveniently, there exists a large body of work in control theory focused on making guarantees about how systems evolve. However, these works usually focus on making guarantees with respect to physical safety requirements, and assume access to an accurate dynamics model of the system as well as physical safety constraints. The central idea behind our work is to instead view the training data distribution as a safety constraint. This allows us to use these ideas from control theory in our design of learning-based control algorithms, thereby inheriting both the scalability of machine learning and the rigorous guarantees of control theory.

This post is based on the paper “Lyapunov Density Models: Constraining Distribution Shift in Learning-Based Control”, presented at ICML 2022. You can find more details in our paper and on our website. We thank Sergey Levine, Claire Tomlin, Dibya Ghosh, Jason Choi, Colin Li, and Homer Walke for their valuable feedback on this blog post.