Keeping up with an industry as fast-moving as AI is a tall order. So until an AI can do it for you, here’s a handy roundup of recent stories in the world of machine learning, along with notable research and experiments we didn’t cover on their own.

This week in AI, the news cycle finally (finally!) quieted down a bit ahead of the holiday season. But that’s not to suggest there was a dearth to write about, a blessing and a curse for this sleep-deprived reporter.

A particular headline from the AP caught my eye this morning: “AI image-generators are being trained on explicit photos of children.” The gist of the story is that LAION, a data set used to train many popular open source and commercial AI image generators, including Stable Diffusion and Imagen, contains thousands of images of suspected child sexual abuse. A watchdog group based at Stanford, the Stanford Internet Observatory, worked with anti-abuse charities to identify the illegal material and report the links to law enforcement.

Now LAION, a nonprofit, has taken down its training data and pledged to remove the offending materials before republishing it. But the incident serves to underline just how little thought is being put into generative AI products as the competitive pressures ramp up.

Thanks to the proliferation of no-code AI model creation tools, it’s becoming frightfully easy to train generative AI on any data set imaginable. That’s a boon for startups and tech giants alike looking to get such models out the door. With the lower barrier to entry, however, comes the temptation to cast aside ethics in favor of an accelerated path to market.

Ethics is hard; there’s no denying that. Combing through the thousands of problematic images in LAION, to take this week’s example, won’t happen overnight. And ideally, developing AI ethically involves working with all relevant stakeholders, including organizations that represent groups often marginalized and adversely impacted by AI systems.

The industry is full of examples of AI release decisions made with shareholders, not ethicists, in mind. Take for instance Bing Chat (now Microsoft Copilot), Microsoft’s AI-powered chatbot on Bing, which at launch compared a journalist to Hitler and insulted their appearance. As of October, ChatGPT and Bard, Google’s ChatGPT competitor, were still giving outdated, racist medical advice. And the latest version of OpenAI’s image generator DALL-E shows evidence of Anglocentrism.

Suffice it to say that harms are being done in the pursuit of AI superiority, or at least Wall Street’s notion of AI superiority. Perhaps with the passage of the EU’s AI regulations, which threaten fines for noncompliance with certain AI guardrails, there’s some hope on the horizon. But the road ahead is long indeed.

Here are some other AI stories of note from the past few days:

Predictions for AI in 2024: Devin lays out his predictions for AI in 2024, touching on how AI might impact the U.S. primary elections and what’s next for OpenAI, among other topics.

Against pseudanthropy: Devin also wrote an op-ed suggesting that AI be prohibited from imitating human behavior.

Microsoft Copilot gets music creation: Copilot, Microsoft’s AI-powered chatbot, can now compose songs thanks to an integration with GenAI music app Suno.

Facial recognition out at Rite Aid: Rite Aid has been banned from using facial recognition tech for five years after the Federal Trade Commission found that the U.S. drugstore giant’s “reckless use of facial surveillance systems” left customers humiliated and put their “sensitive information at risk.”

EU offers compute resources: The EU is expanding its plan, originally announced back in September and kicked off last month, to support homegrown AI startups by providing them with access to processing power for model training on the bloc’s supercomputers.

OpenAI gives board new powers: OpenAI is expanding its internal safety processes to fend off the threat of harmful AI. A new “safety advisory group” will sit above the technical teams and make recommendations to leadership, and the board has been granted veto power.

Q&A with UC Berkeley’s Ken Goldberg: For his regular Actuator newsletter, Brian sat down with Ken Goldberg, a professor at UC Berkeley, a startup founder and an accomplished roboticist, to talk humanoid robots and broader trends in the robotics industry.

CIOs take it slow with gen AI: Ron writes that, while CIOs are under pressure to deliver the kind of experiences people are seeing when they play with ChatGPT online, most are taking a deliberate, cautious approach to adopting the tech for the enterprise.

News publishers sue Google over AI: A class action lawsuit filed by several news publishers accuses Google of “siphon[ing] off” news content through anticompetitive means, partly through AI tech like Google’s Search Generative Experience (SGE) and Bard chatbot.

OpenAI inks deal with Axel Springer: Speaking of publishers, OpenAI inked a deal with Axel Springer, the Berlin-based owner of publications including Business Insider and Politico, to train its generative AI models on the publisher’s content and add recent Axel Springer-published articles to ChatGPT.

Google brings Gemini to more places: Google integrated its Gemini models with more of its products and services, including Vertex AI, its managed AI dev platform, and AI Studio, the company’s tool for authoring AI-based chatbots and other experiences along those lines.

Extra machine learnings

Certainly the wildest (and easiest to misinterpret) research of the last week or two has to be life2vec, a Danish study that uses countless data points in a person’s life to predict what that person is like and when they’ll die. Roughly!

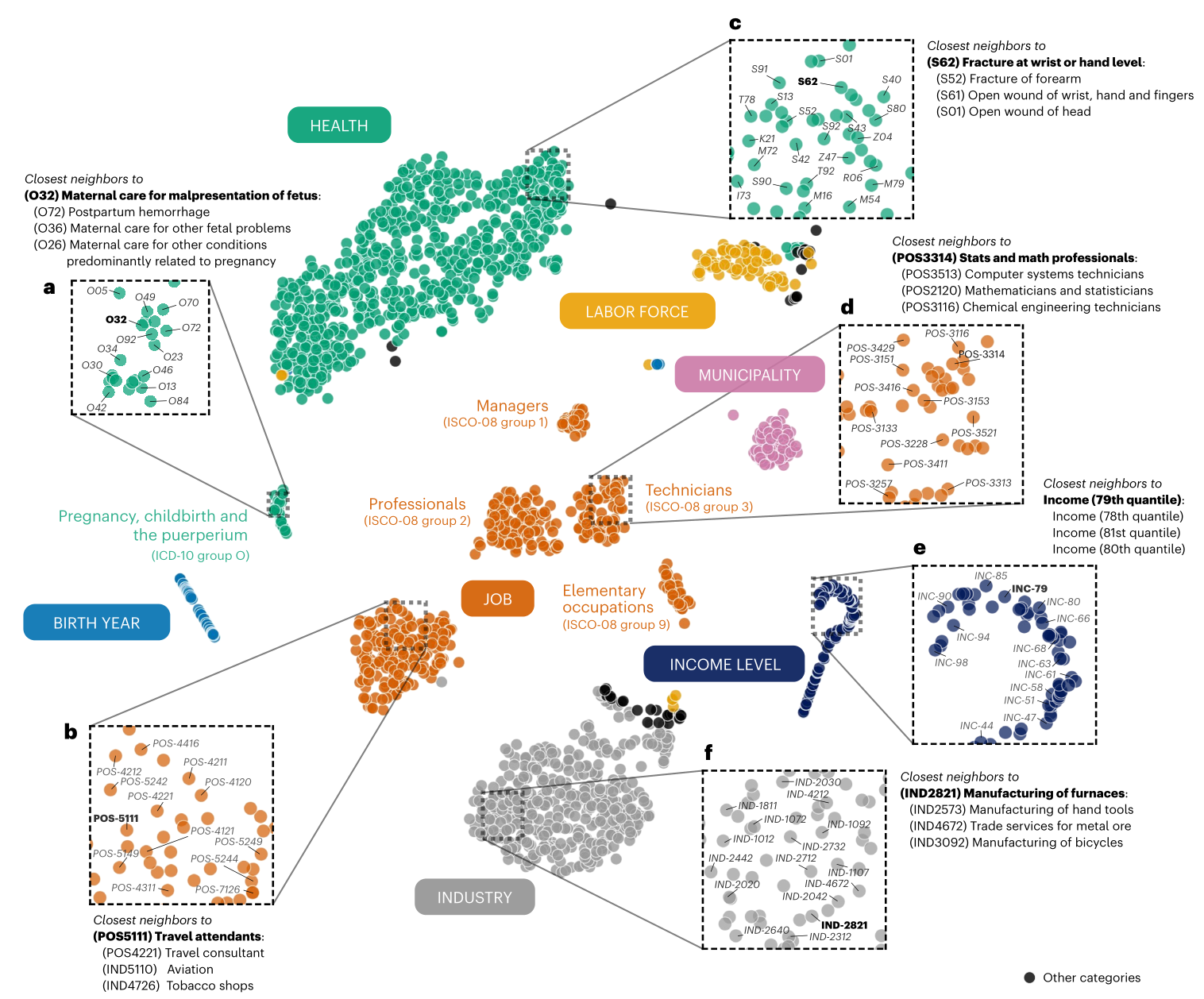

Visualization of life2vec’s mapping of various relevant life concepts and events.

The study isn’t claiming oracular accuracy (say that three times fast, by the way) but rather intends to show that if our lives are the sum of our experiences, those paths can be extrapolated to some degree using current machine learning techniques. Between upbringing, education, work, health, hobbies, and other metrics, one may reasonably predict not just whether someone is, say, introverted or extroverted, but how these factors may affect life expectancy. We’re not quite at “precrime” levels here, but you can bet insurance companies can’t wait to license this work.

Another big claim was made by CMU scientists who created a system called Coscientist, an LLM-based assistant for researchers that can do a lot of lab drudgery autonomously. It’s limited to certain domains of chemistry currently, but just like scientists, models like these will be specialists.

Lead researcher Gabe Gomes told Nature: “The moment I saw a non-organic intelligence be able to autonomously plan, design and execute a chemical reaction that was invented by humans, that was amazing. It was a ‘holy crap’ moment.” Basically, it uses an LLM like GPT-4, fine-tuned on chemistry documents, to identify common reactions, reagents, and procedures and perform them. So you don’t need to tell a lab tech to synthesize four batches of some catalyst; the AI can do it, and you don’t even need to hold its hand.

Google’s AI researchers have had a big week as well, diving into a few interesting frontier domains. FunSearch may sound like Google for kids, but it’s actually short for function search, which, like Coscientist, is able to make and help make mathematical discoveries. Interestingly, to prevent hallucinations, this system (like others recently) uses a matched pair of AI models, a lot like the “old” GAN architecture. One theorizes, the other evaluates.

While FunSearch isn’t going to make any groundbreaking new discoveries, it can take what’s out there and hone or reapply it in new places, so a function that one domain uses but another is unaware of might be used to improve an industry-standard algorithm.
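To make the “one theorizes, the other evaluates” idea a little more concrete, here’s a toy Python sketch of a generic generate-and-evaluate search loop. It is not FunSearch’s code, and several assumptions are doing the heavy lifting: a random perturbation (the hypothetical `propose`) stands in for the generating model, a fixed scoring function (the hypothetical `evaluate`) stands in for the evaluating side, and the “functions” being searched are just the two coefficients of a line.

```python
import random
from typing import List, Tuple

# Hypothetical toy setup: a candidate "function" is f(x) = a*x + b,
# represented by its coefficients (a, b).
Candidate = Tuple[float, float]


def propose(parent: Candidate, rng: random.Random) -> Candidate:
    """Stand-in for the generator: suggest a variation on the best candidate so far."""
    a, b = parent
    return (a + rng.uniform(-1.0, 1.0), b + rng.uniform(-1.0, 1.0))


def evaluate(candidate: Candidate, data: List[Tuple[float, float]]) -> float:
    """Stand-in for the evaluator: score a candidate against known examples.

    Higher is better; 0.0 means the candidate reproduces every example exactly.
    """
    a, b = candidate
    return -sum((a * x + b - y) ** 2 for x, y in data)


def search(data: List[Tuple[float, float]], steps: int = 2000,
           seed: int = 0) -> Tuple[Candidate, float]:
    rng = random.Random(seed)  # seeded so runs are reproducible
    best: Candidate = (0.0, 0.0)
    best_score = evaluate(best, data)
    for _ in range(steps):
        child = propose(best, rng)
        score = evaluate(child, data)
        # The evaluator has the final say: a proposal is kept only if it
        # scores strictly better, so a bad guess never replaces a good one.
        if score > best_score:
            best, best_score = child, score
    return best, best_score


if __name__ == "__main__":
    # Hidden target the search should rediscover: y = 3x - 2
    data = [(x, 3 * x - 2) for x in range(-5, 6)]
    best, score = search(data)
    print(best, score)
```

The design point the sketch illustrates is that the generator never gets to decide what survives; only candidates the checker scores higher are kept, which is the general reason pairing a proposer with an evaluator can filter out confidently wrong output.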

StyleDrop is a handy tool for people looking to replicate certain styles via generative imagery. The trouble (as the researchers see it) is that if you have a style in mind (say “pastels”) and describe it, the model will have too many sub-styles of “pastels” to pull from, so the results will be unpredictable. StyleDrop lets you provide an example of the style you’re thinking of, and the model will base its work on that. It’s basically super-efficient fine-tuning.

Image Credits: Google

The blog post and paper show that it’s pretty robust, applying a style from any image, whether it’s a photo, painting, cityscape or cat portrait, to any other type of image, even the alphabet (notoriously hard for some reason).

Google is also moving ahead in generative video with VideoPoet, which uses an LLM base (like everything else these days… what else are you going to use?) to do a bunch of video tasks: turning text or images into video, extending or stylizing existing video, and so on. The challenge here, as every project makes clear, is not simply making a series of images that relate to one another, but making them coherent over longer periods (like more than a second) and with large movements and changes.

Image Credits: Google

VideoPoet moves the ball forward, it seems, though as you can see the results are still pretty weird. But that’s how these things progress: first they’re inadequate, then they’re weird, then they’re uncanny. Presumably they leave uncanny behind at some point, but no one has really gotten there yet.

On the practical side of things, Swiss researchers have been applying AI models to snow measurement. Normally one would rely on weather stations, but these can be few and far between, and we have all this lovely satellite data, right? Right. So the ETHZ team took public satellite imagery from the Sentinel-2 constellation, but as lead Konrad Schindler puts it, “Just looking at the white bits on the satellite images doesn’t immediately tell us how deep the snow is.”

So they added terrain data for the whole country from their Federal Office of Topography (like our USGS) and trained the system to estimate depth based not just on white bits in imagery but also on ground truth data and trends like melt patterns. The resulting tech is being commercialized by ExoLabs, which I’m about to contact to learn more.

A word of caution from Stanford, though: as powerful as applications like the above are, note that none of them involve much in the way of human bias. When it comes to health, that suddenly becomes a big problem, and health is where a ton of AI tools are being tested. Stanford researchers showed that AI models propagate “old medical racial tropes.” GPT-4 doesn’t know whether something is true or not, so it can and does parrot old, disproved claims about groups, such as that Black people have lower lung capacity. Nope! Stay on your toes if you’re working with any kind of AI model in health and medicine.

Lastly, here’s a short story written by Bard with a shooting script and prompts, rendered by VideoPoet. Watch out, Pixar!